GPT-3, also known as the third-generation Generative Pre-trained Transformer, is a neural network machine learning model developed by OpenAI and trained on internet data to generate any type of text. It’s capable of generating human-like text and has a wide range of applications, including translation, language modeling and text generation for applications such as chatbots.

In less corporate terms, GPT-3 lets the user give a trained AI a wide range of worded prompts, whether they are questions, requests or instructions to write a text on a certain topic.

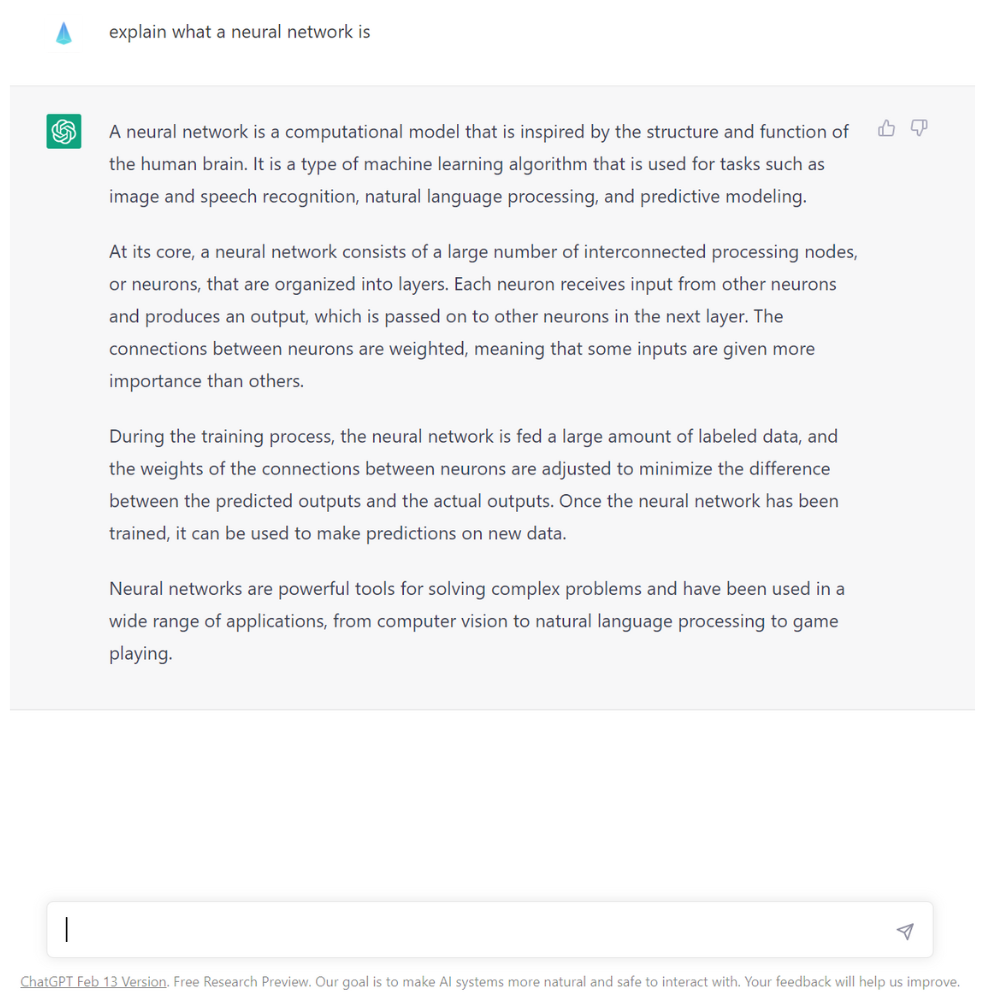

Its best-known application so far is ChatGPT, a highly capable chatbot built on top of it.

Before going any further, it’s important to understand the difference between GPT-3 and ChatGPT.

● GPT-3: a model from OpenAI. It is a more general-purpose model that can be used for a wide range of language-related tasks.

● ChatGPT: a model specifically designed for conversational tasks. It is trained on a smaller amount of data than GPT-3, which may affect its ability to generate diverse and nuanced responses.

Today, GPT-3 is famous for being considered one of the most powerful deep learning neural networks ever built. It has 175 billion machine learning parameters.

To put things in perspective, the largest trained language model before GPT-3 was Microsoft’s Turing NLG model, which had 17 billion parameters. As of early 2021, GPT-3 was the largest neural network ever produced. As a result, it is better than any prior model at producing text that is convincing enough to seem like a human could have written it.
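To get a rough feel for what 175 billion parameters means in practice, here is a back-of-the-envelope sketch of how much space the model's weights alone would take. The storage sizes (2 bytes per parameter for 16-bit numbers, 4 bytes for 32-bit numbers) are assumptions made for illustration and are not taken from this article.

```python
# Rough size of the model weights, assuming every parameter is stored
# as a single number of a fixed width (an assumption for this sketch).
PARAMETERS = 175_000_000_000  # parameter count quoted in the article


def weights_size_gb(num_parameters, bytes_per_parameter):
    """Return the storage needed for the weights, in gigabytes (10^9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


for label, nbytes in [("16-bit", 2), ("32-bit", 4)]:
    print(f"{label} weights: {weights_size_gb(PARAMETERS, nbytes):.0f} GB")
```

Under these assumptions the weights alone come to several hundred gigabytes, which is why a model of this size is typically accessed as a hosted service rather than run on a personal computer.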

But how does it work? GPT-3 is a language prediction model. This means that it is a neural network machine learning model that takes text as input and transforms it into what it predicts the most useful result will be.

This is accomplished by training the system on a vast body of internet text (for example, Wikipedia is included in GPT-3’s training data).
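The idea of learning from text and then predicting what comes next can be illustrated with a deliberately tiny sketch. This is not how GPT-3 is implemented (GPT-3 is a very large Transformer neural network, not a word-pair counter). The corpus and the function names `train_bigrams`, `suggest` and `generate` are made up for this illustration.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus for illustration (not GPT-3 training data).
CORPUS = (
    "the cat sat on the mat . "
    "the cat ate the fish . "
    "the dog sat on the rug ."
)


def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def suggest(follows, word, k=3):
    """Return up to k of the most frequent next words after `word`."""
    return [w for w, _ in follows.get(word, Counter()).most_common(k)]


def generate(follows, start, length=5):
    """Extend `start` by repeatedly picking the most frequent next word."""
    out = [start]
    for _ in range(length):
        options = suggest(follows, out[-1], k=1)
        if not options:  # the last word was never seen with a follower
            break
        out.append(options[0])
    return " ".join(out)


model = train_bigrams(CORPUS)
print(suggest(model, "the"))             # candidate words to follow "the"
print(generate(model, "dog", length=3))  # a short continuation from "dog"
```

The toy model only looks at the single previous word and a few sentences of text. GPT-3 applies the same "predict what comes next" principle, but with a neural network, far more context, and vastly more training text.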

This is the first time a model has been both general-purpose and high in quality, which allows GPT-3 to answer a wide range of questions. Generating content that humans can understand is a challenge for machines, which don’t really know all the complexities and nuances of language. However, by learning from text on the internet, GPT-3 is trained to generate realistic human text.

GPT-3 has been used to create articles, poetry, stories, news reports and dialogue, using just a small amount of input text to produce large amounts of quality copy.

It is also being used for automated conversational tasks, responding to any text that a person types into the computer with a new piece of text appropriate to the context. The strength of GPT-3 is that it can create anything with a text structure: not just human language text, but also text summaries and, to a certain extent, program code. Let’s be honest, GPT-3 is not a coding tool and might never be, but it can generate very simple lines of code.

So how can a company use it?

For example, customer service centers can use GPT-3 to answer customer questions or support chatbots; sales teams can use it to connect with potential customers; and marketing teams can write copy using GPT-3.

Amongst other things, it can help with:

● Monitoring and analyzing social media conversations and sentiments

● Generating an annual social media content calendar including images, text, video…

● Automating the scheduling of social media posts

● Creating captions and subtitles for videos

● Monitoring and analyzing website traffic

● Generating ad campaign strategies

● Identifying potential crises and generating response plans

● Drafting and sending unique personalized emails

● Creating entertaining video content with deepfake and voice cloning

● Pitching news to reporters and following up on Twitter

It is considered a magic wand for all social media tasks today. But let’s be clear, it has its pitfalls. In the wrong hands, GPT-3 could be a powerful tool to generate armies of trolls. Tomorrow, it could enable the creation of fake reviews for social media accounts with the right vocabulary, syntax and even emojis, at scale.

While GPT-3 is remarkably large and powerful, it has several limitations and risks associated with its usage. The biggest issue is that GPT-3 is not constantly learning. It has been pre-trained, which means that it doesn't have an ongoing long-term memory that learns from each interaction. In addition, GPT-3 suffers from the same problem as all neural networks: it cannot explain or interpret why certain inputs result in specific outputs.

Let’s face it, even if it is a very clever machine learning algorithm, it is still limited. It will always answer a question or create a text by just guessing which word should come next, the same way your smartphone offers you three word choices to follow the previous one when you write a text message. We all consider GPT-3 a creative and innovative tool. While it is innovative, we cannot really say that it is creative. GPT-3 was not built to be creative but to be knowledgeable.